Essay

The Scaling Imperative: A First-Principles Comparison of Human-Cognitive and Integrated Computational Networks for Planetary-Scale Intelligence

Abstract

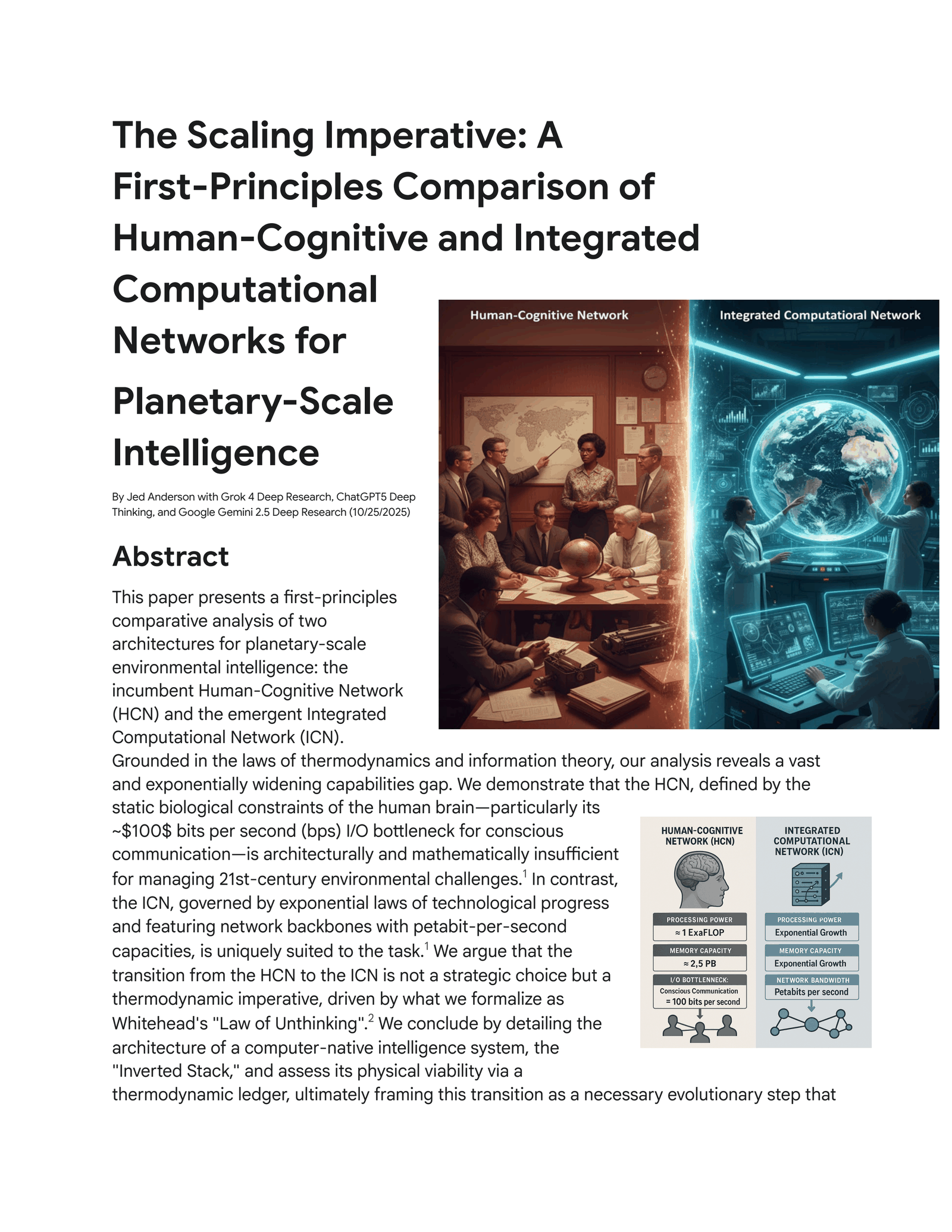

This paper presents a first-principles comparative analysis of two architectures for planetary-scale environmental intelligence: the incumbent Human-Cognitive Network (HCN) and the emergent Integrated Computational Network (ICN). Grounded in the laws of thermodynamics and information theory, our analysis reveals a vast and exponentially widening capabilities gap. We demonstrate that the HCN, defined by the static biological constraints of the human brain, particularly its ~100 bits per second (bps) I/O bottleneck for conscious communication, is architecturally and mathematically insufficient for managing 21st-century environmental challenges.1 In contrast, the ICN, governed by exponential laws of technological progress and featuring network backbones with petabit-per-second capacities, is uniquely suited to the task.1 We argue that the transition from the HCN to the ICN is not a strategic choice but a thermodynamic imperative, driven by what we formalize as Whitehead’s “Law of Unthinking”.2 We conclude by detailing the architecture of a computer-native intelligence system, the “Inverted Stack,” and assess its physical viability via a thermodynamic ledger, ultimately framing this transition as a necessary evolutionary step that elevates the human role from operational node to strategic and ethical architect of a thriving planet.2

Part I: An Architecture of Biological Limits: The Human-Cognitive Network (HCN)

The Human-Cognitive Network (HCN) is the de facto global intelligence system, composed of approximately eight billion individual human brains communicating through evolved and learned protocols.1 While a product of millions of years of successful evolution, its architecture is defined by biological constraints that render it fundamentally unscalable for the precise, high-volume data tasks required by modern planetary management. A first-principles deconstruction of the HCN reveals a system that is not flawed, but rather a highly optimized evolutionary product whose design parameters are fundamentally misaligned with the requirements of our current data-intensive era.

The Individual Node: The Human Brain as a Computational Unit

The human brain is a paradoxical device: a marvel of low-power, massively parallel computation that is simultaneously a severely constrained network component.1 Understanding this duality is critical to appreciating its profound limitations in the context of a planetary-scale information network.

The brain’s raw computational prowess is staggering. Based on its approximately 86 billion neurons and the trillions of synaptic connections between them, its processing power is estimated to be on par with the world’s fastest supercomputers, at approximately 1 ExaFLOP, or 10¹⁸ floating-point operations per second.1 It achieves this feat while consuming only about 20 watts of power, a level of energy efficiency that is orders of magnitude greater than any engineered computer.1 In terms of storage, credible neuroscientific research, such as that from Paul Reber of Northwestern University, places the brain’s theoretical capacity at a vast 2.5 petabytes (PB).1 However, this immense potential is not analogous to a digital hard drive. Human memory is not a static, reliable storage medium; it is dynamic, associative, and inherently lossy.1

Neurobiological processes of memory consolidation are selective and stochastic, with research suggesting that only a small fraction of daily experiences are encoded into long-term memory.1 Furthermore, information recall is imperfect and subject to degradation over time. Studies have shown that humans can forget up to 70% of new information within 24 hours.1 This high rate of data loss and corruption makes the brain an unreliable repository for the kind of precise, high-fidelity datasets essential for scientific analysis.

These specifications are not accidental. They are the product of millions of years of evolution optimizing for a specific task: real-time survival in a complex physical environment. The architecture is designed to process high-bandwidth sensory data for immediate pattern recognition—such as identifying a predator’s movement in a dense forest—not to store and recall petabytes of abstract symbolic data with perfect fidelity. This evolutionary purpose is the root cause of its unsuitability for modern scientific computation. The brain is not a flawed general-purpose computer; it is a perfect, highly specialized survival engine being misapplied to a task for which it was never designed.1

The Critical Bottleneck: Input/Output Bandwidth for Conscious Thought

The brain’s most profound and non-negotiable limitation as a network node is its extremely narrow channel for conscious data transfer. This input/output (I/O) bottleneck cripples its ability to function effectively in a data-rich network and represents the system’s fatal flaw.2

While the human sensory system is a high-bandwidth interface, gathering an estimated 11 million bits per second (bps) of data from the environment, the conscious mind, the seat of deliberate analysis and abstract thought, can process only a tiny fraction of this input.1 Research indicates this conscious processing rate is between 10 and 50 bps.1 This implies a compression ratio of roughly 220,000:1 to 1,100,000:1 (11 × 10⁶ bps divided by 50 bps and by 10 bps, respectively), in which a torrent of sensory data is filtered and reduced to a trickle for conscious consideration.

The output channels for communicating this consciously formulated information are similarly constrained. The average rate of human speech, between 140 and 160 words per minute (wpm), translates to a bandwidth of approximately 100 bps, as shown by the following calculation 1:

(150 words/min ÷ 60 s/min) × 5 characters/word × 8 bits/character ≈ 100 bits/second

The data rate for an average typist is a mere 27 bps, while even a fast professional typist struggles to exceed 50 bps.1 The primary channel for high-volume data intake, silent reading, averages around 159 bps.1
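The conversions above can be reproduced with a short sketch. It uses the same assumptions as the calculation (5 characters per word, 8 bits per character); the function names and the inverse helper are illustrative and do not come from the source.

```python
# Rough bandwidth of human language channels.
# Assumptions (taken from the calculation above): 5 characters per word,
# 8 bits per character. Function names are illustrative.

CHARS_PER_WORD = 5
BITS_PER_CHAR = 8


def wpm_to_bps(words_per_minute: float) -> float:
    """Convert a words-per-minute rate to bits per second."""
    return words_per_minute / 60 * CHARS_PER_WORD * BITS_PER_CHAR


def bps_to_wpm(bits_per_second: float) -> float:
    """Inverse conversion: the word rate implied by a given bit rate."""
    return bits_per_second * 60 / (CHARS_PER_WORD * BITS_PER_CHAR)


if __name__ == "__main__":
    # Speech range quoted in the text.
    for wpm in (140, 150, 160):
        print(f"speech at {wpm} wpm -> {wpm_to_bps(wpm):.1f} bps")

    # Bit rates quoted in the text, converted back to implied word rates.
    quoted = (("average typist", 27), ("fast typist", 50), ("silent reading", 159))
    for label, bps in quoted:
        print(f"{label}: {bps} bps -> about {bps_to_wpm(bps):.1f} wpm")
```

Under these assumptions, the 140 to 160 wpm speech range corresponds to roughly 93 to 107 bps, and the quoted 27, 50, and 159 bps figures imply about 40, 75, and 240 words per minute respectively.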

When the brain’s 1 ExaFLOP internal processing power is juxtaposed with its ~100 bps external communication bandwidth, the absurdity of the architecture for data-intensive tasks becomes clear. This is not a minor limitation; it is a fundamental design flaw for this specific application. The human brain is effectively an Exascale computer trapped behind a 100-baud modem.2 This reframes the problem of planetary management from “humans aren’t smart enough” to “human biology is not architected for high-bandwidth data networking.”

The Network Protocol: The Inefficiencies of Human Communication

When individual human nodes connect to form the HCN, the “network protocols” of language and social interaction are plagued by high latency, low bandwidth, and a profound rate of data corruption when compared to engineered systems.1

Communication is subject to immense delays, not from the physical propagation of sound or light, but from cognitive processing (the time required to formulate a thought or response) and social queuing (the time spent waiting for a turn to speak in a meeting, waiting for an email reply, or for a peer-reviewed paper to be published).1 These delays are measured in seconds, minutes, hours, or even months, creating a system that is incapable of real-time, large-scale coordination.1

Unlike error-correcting digital protocols, human communication is a profoundly lossy network. Data is corrupted at the receiving node through misinterpretation, where ambiguous language, cultural differences, and personal biases can distort the intended meaning.1 Furthermore, as information passes through a chain of human nodes, it is subject to degradation, simplification, and embellishment at each step, a phenomenon known as an information cascade.1 This is analogous to a signal accumulating noise with each hop in a network without repeaters.

The HCN is not a single, cohesive global network. It is better understood as millions of small, high-trust “local area networks” (LANs), such as research labs, small companies, and community groups, that operate with relative efficiency due to shared context and trust. However, the “wide area network” (WAN) links between these groups, such as academic papers and international conferences, are characterized by extreme latency and low bandwidth. This architectural flaw makes truly integrated, real-time global coordination physically impossible.1

The Sociological Scaling Barrier: Dunbar’s Number and Coordination Costs

Beyond the technical limitations of communication, the HCN’s ability to scale is constrained by fundamental cognitive and sociological limits on the size of effective, cohesive groups.1

British anthropologist Robin Dunbar proposed a cognitive limit to the number of people with whom an individual can maintain stable social relationships, a figure commonly cited as approximately 150.1 Dunbar theorized this limit is a direct function of the size of the human neocortex. Beyond this number, social cohesion breaks down, and groups require formal hierarchies, laws, and enforced rules to maintain stability, which introduces significant organizational overhead and reduces efficiency.1

As the size of a group increases, the number of potential communication links between its members grows quadratically (n(n − 1)/2 for a group of n members), leading to a non-linear increase in the time and energy required for coordination.1 This “coordination cost” can quickly consume a group’s entire productive capacity. Dunbar’s number is not just a sociological curiosity; it is a hard, biological constraint on the scalability of any system that relies on human trust and informal coordination. It implies that our brains are simply not wired to manage the large, impersonal networks required for global-scale projects. This provides a first-principles, neurobiological explanation for why top-down, large-scale human governance systems are so often inefficient and prone to failure.1
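Counting pairwise links makes the coordination cost concrete. The sketch below assumes a fully connected group in which any member may need to communicate with any other, so a group of n members has n(n − 1)/2 possible links; the function name and the sample group sizes are illustrative.

```python
# Number of distinct pairwise communication links in a fully connected group.
# Assumption: every member may need to talk to every other member.


def pairwise_links(n: int) -> int:
    """Return the number of unordered pairs among n members (n choose 2)."""
    if n < 0:
        raise ValueError("group size must be non-negative")
    return n * (n - 1) // 2


if __name__ == "__main__":
    # 150 is the commonly cited Dunbar figure; the other sizes are arbitrary.
    for n in (5, 15, 50, 150, 1500):
        print(f"{n:>5} members -> {pairwise_links(n):>9,} potential links")
```

A group at the commonly cited Dunbar figure of 150 has 11,175 potential links, and a group ten times larger has about a hundred times as many (1,124,250).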

Part II: An Architecture of Exponential Growth: The Integrated Computational Network (ICN)

In stark contrast to the static, biologically constrained HCN, the Integrated Computational Network (ICN) is an engineered system designed explicitly for precision, speed, and, most critically, predictable exponential scalability. Its components and the network that connects them are governed by laws of technological progress that continuously and relentlessly expand its capabilities.

The Individual Node: The Modern Compute Unit and Lossless Storage System

The fundamental node of the ICN is the modern server or supercomputer: a fully programmable, high-fidelity, and modular component whose capabilities are continuously improving.1

The world’s leading supercomputers now operate at the Exascale. As of the June 2025 TOP500 list, the El Capitan system at Lawrence Livermore National Laboratory achieves a performance of 1.742 ExaFLOPs on the standard LINPACK benchmark.1 This means a single machine can match the estimated raw processing power of a human brain, but in a fully programmable and directable form that can be precisely focused on a specific problem, such as running a high-resolution climate model.1

Digital storage operates at a scale, and with a fidelity, that biological memory cannot offer. Global digital data storage is projected to exceed 200 zettabytes (ZB) by 2025.1 For comparison, the theoretical storage capacity of all 8 billion human brains combined is approximately 20,000 ZB (8 × 10⁹ people × 2.5 × 10⁻⁶ ZB/person), but that capacity is private, lossy, and unavailable as a shared, addressable store.1 Unlike the malleable and degradable nature of human memory, digital storage is designed for perfect, lossless replication, ensured by layers of error-correction code. This property is non-negotiable for science, as it allows for the creation of a definitive, verifiable, and shared “source of truth” for data.1

The most profound difference between a brain and a computer is not raw power but modularity. The brain is a monolithic, closed system; its core components cannot be upgraded or reconfigured. In contrast, the ICN is built on standardized, modular components (CPUs, GPUs, RAM, storage) that can be upgraded and reconfigured in virtually limitless combinations because they adhere to standardized interfaces and communication protocols, such as TCP/IP between machines.1

This makes the ICN not just a collection of powerful computers, but a single, reconfigurable, globally distributed supercomputer that can be precisely tailored to meet the demands of any scientific problem—an adaptability the static, monolithic architecture of the brain can never achieve.

The Network Substrate: The Global Fiber-Optic Fabric

The fiber-optic networks connecting the ICN’s nodes provide a near-instantaneous, high-fidelity fabric for data exchange that effectively eliminates geography as a primary constraint on collaboration and computation.1

The data rates achievable in modern fiber-optic networks are difficult to comprehend. Recent research demonstrations in 2025 have achieved transmission speeds of 1.02 petabits per second (Pb/s) over a multi-core fiber.1 A separate experiment reached 402 terabits per second (Tb/s) over standard commercial fiber.1 To put this in perspective, one petabit per second (10¹⁵ bps) is more than ten trillion (10¹³) times faster than the ~100 bps data rate of human speech.1

In a fiber-optic network, latency is primarily limited by the speed of light in glass, which is roughly two-thirds the speed of light in a vacuum. This results in delays of approximately 5 microseconds (5 μs) for every kilometer of distance, allowing for transcontinental data transmission in mere milliseconds.1 Digital communication protocols such as TCP/IP are designed with robust, built-in error detection and correction mechanisms, ensuring that data arriving at a destination is an exact, bit-for-bit replica of the data that was sent.1
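The 5 μs per kilometer figure follows directly from the propagation speed. The sketch below assumes, as the text does, that light in glass travels at about two-thirds of its vacuum speed; the route lengths are hypothetical round numbers, and the estimate ignores switching, queuing, and the fact that real cables do not follow straight lines.

```python
# One-way propagation delay in optical fiber.
# Assumption from the text: light in glass travels at about 2/3 of c.
# Route lengths below are hypothetical round numbers, not measured paths.

C_VACUUM_M_PER_S = 299_792_458  # exact, by definition of the metre
VELOCITY_FACTOR = 2 / 3         # approximate; depends on the fiber


def fiber_delay_seconds(distance_km: float) -> float:
    """Return the one-way propagation delay over distance_km of fiber."""
    speed_m_per_s = C_VACUUM_M_PER_S * VELOCITY_FACTOR
    return distance_km * 1_000 / speed_m_per_s


if __name__ == "__main__":
    routes_km = {
        "1 km": 1,
        "hypothetical 4,000 km continental route": 4_000,
        "hypothetical 10,000 km intercontinental route": 10_000,
    }
    for label, km in routes_km.items():
        delay = fiber_delay_seconds(km)
        print(f"{label}: {delay * 1e3:.3f} ms ({delay * 1e6:.1f} us)")
```

Even the longest hypothetical route here stays in the tens of milliseconds, which is the sense in which the text calls transcontinental transmission a matter of "mere milliseconds".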

The combination of extreme bandwidth and ultra-low latency fundamentally transforms the nature of collaboration. In the HCN, collaboration is easiest and most efficient among physically co-located individuals. In the ICN, the physical location of data, computation, and human expertise becomes largely irrelevant. This “death of distance” effectively “compresses” the planet, enabling the creation of a truly integrated global intelligence system, a feat the HCN, forever bound by the friction of geography, can never replicate.1

The Governing Dynamics: Moore’s, Kryder’s, and Nielsen’s Laws of Exponential Progress

The capabilities of the ICN are not static; they are governed by well-established laws of exponential technological progress that ensure a continuous and predictable expansion of its power.1

Gordon Moore’s original 1965 observation, which he revised in 1975, holds that the number of transistors on an integrated circuit doubles approximately every two years.1 While the pace of simple geometric scaling has slowed, the spirit of Moore’s Law continues in what is now termed “system scaling”.1 Performance gains are now driven by architectural innovations like chiplet-based designs and 3D stacking technologies, which continue to drive exponential improvements in performance, density, and energy efficiency.1

This is complemented by Kryder’s Law, an observation analogous to Moore’s Law for magnetic storage, which states that the areal density of hard drives doubles roughly every 13 months.1 This trend has driven the exponential decrease in the cost of data storage, making the retention of massive environmental datasets economically feasible. Finally, Nielsen’s Law of Internet Bandwidth, articulated in 1998, observes that the connection speed for high-end users grows by 50% per year.1 This ensures that the network’s capacity can keep pace with the exponentially growing volumes of data generated by sensors and scientific instruments.
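To compare the three laws on a common footing, the sketch below converts each stated rate into a cumulative growth factor. It assumes idealized, uninterrupted compounding at the quoted rates, which is a simplification given the slowdown in geometric scaling noted above; the function names and the 10-year horizon are illustrative.

```python
# Idealized compounding under the growth rates quoted in the text.
# Assumption: each rate holds constant over the whole horizon.
import math


def growth_from_doubling(years: float, doubling_time_years: float) -> float:
    """Growth factor after `years` if capacity doubles every doubling_time_years."""
    return 2 ** (years / doubling_time_years)


def growth_from_annual_rate(years: float, annual_rate: float) -> float:
    """Growth factor after `years` at a fixed fractional annual growth rate."""
    return (1 + annual_rate) ** years


def doubling_time_from_rate(annual_rate: float) -> float:
    """Doubling time in years implied by a fixed fractional annual growth rate."""
    return math.log(2) / math.log(1 + annual_rate)


if __name__ == "__main__":
    horizon = 10  # years; arbitrary illustrative horizon
    laws = {
        "Moore (doubling every 2 years)": growth_from_doubling(horizon, 2.0),
        "Kryder (doubling every 13 months)": growth_from_doubling(horizon, 13 / 12),
        "Nielsen (+50% per year)": growth_from_annual_rate(horizon, 0.5),
    }
    for name, factor in laws.items():
        print(f"{name}: x{factor:,.0f} over {horizon} years")
    print(f"Nielsen doubling time: {doubling_time_from_rate(0.5):.2f} years")
```

Expressed as a doubling time, Nielsen's 50% per year is about 1.7 years, which places it between the two-year and 13-month doubling times quoted for Moore's and Kryder's laws.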

The exponential improvement of the ICN is a powerful real-world example of the core thesis driving this transition. The “unthinking” operation of chip design was itself automated and accelerated by the very computers it was creating. This established a powerful positive feedback loop that drives the system’s relentless advance. The ICN is not just a tool; it is a self-accelerating engine of progress.2

Part III: A Quantitative Chasm and the Thermodynamic Imperative for Transition

Synthesizing the analyses of the HCN and ICN reveals a capabilities gap that is not only immense but widening at an accelerating rate. This section quantifies that gap and introduces the fundamental physical principle that makes the transition between these two systems an inevitability.

Direct Comparative Analysis: A Juxtaposition of Architectures

A side-by-side comparison of the HCN and ICN across every key metric reveals a performance gap of many orders of magnitude. This makes the choice between them for data-intensive tasks a matter of physical reality, not preference. The following tables distill the preceding analysis into a stark, quantitative juxtaposition, serving as the central, undeniable evidence for this paper’s thesis.

Table 1 provides a comparison at the level of the individual computational node. It highlights the fundamental trade-offs and demonstrates why, for tasks involving large-scale data processing and communication, the engineered system is superior on every relevant metric except for raw power efficiency.

Table 1: The Individual Computational Node: Human Brain vs. 2025 ICN Node

Each entry lists the metric, the value for the human brain (HCN node), the value for a 2025 ICN node (e.g., an HPC server), and the magnitude of difference (ICN vs. HCN).

- Processing Speed: Human brain ~1 ExaFLOP (estimated, highly parallel); 2025 ICN node multi-PetaFLOPs to ExaFLOPs (programmable); difference ~1000× for specific tasks.

- Storage Capacity: Human brain 2.5 PB (theoretical, volatile); ICN node terabytes of RAM, petabytes of attached storage; comparable, but ICN is stable and expandable.

- Power Consumption: Human brain ~20 Watts; ICN node kilowatts to megawatts; ~10² to 10⁶ times higher for the ICN node.

- Communication I/O: Human brain 10–160 bps (conscious thought, speech); ICN node >400 Gbps (e.g., Infiniband); >10⁹ (billion) times faster.

- Data Fidelity: Human brain high error rate (forgetting, bias, misinterpretation); ICN node near-zero error rate (error-corrected protocols); fundamentally lossless versus lossy.

Table 2 scales this comparison to the network level. It shows how the limitations of the individual nodes and communication protocols of the HCN are magnified across the system, while the advantages of the ICN compound to create a system of vastly superior power and scale.

Table 2: The Intelligence Network: HCN vs. ICN

Each entry lists the metric, the value for the Human-Cognitive Network (HCN), the value for the Integrated Computational Network (ICN), and the magnitude of difference (ICN vs. HCN).

- Network Bandwidth: HCN ~100 bps per link (speech); ICN petabits/sec (fiber backbone); >10¹³ (ten trillion) times faster.

- Latency: HCN seconds to days (cognitive and social delays); ICN microseconds to milliseconds (speed of light); >10⁶ to 10⁹ times lower.

- Max Practical Network Size: HCN ~150 nodes (Dunbar’s cognitive limit); ICN virtually unlimited (billions of nodes); fundamentally unconstrained.

- Coordination Overhead: HCN high and grows non-linearly with size; ICN low and automated via software protocols; minimal and algorithmically managed.

- Scalability: HCN biologically static; ICN exponential (Moore’s/Nielsen’s Laws); dynamic and growing versus fixed.

The Causal Driver: Whitehead’s “Law of Unthinking” and the Metabolic Cost of Cognition

The transition from the HCN to the ICN is not a historical accident or a matter of simple technological preference. It is driven by a fundamental thermodynamic imperative to conserve scarce, metabolically expensive cognitive energy.2 In 1911, the mathematician and philosopher Alfred North Whitehead articulated this profound insight: “Civilization advances by extending the number of important operations which we can perform without thinking about them”.2 This principle can be formalized as the Law of Unthinking (LoU).2

The LoU is grounded in thermodynamics. Conscious cognitive effort is a scarce, metabolically expensive resource. The human brain, while comprising only about 2% of body mass, consumes roughly 20% of the body’s resting energy, dissipating approximately 20 watts during focused thought.2 Whitehead compared these “operations of thought” to “cavalry charges in a battle—they are strictly limited in number, they require fresh horses, and must only be made at decisive moments”.2

This scarcity creates an intense evolutionary and civilizational pressure to conserve this finite resource. Automating an “important operation” by embedding it in a more efficient technological substrate is a thermodynamically favorable strategy. It minimizes the internal energy cost and entropy production required to maintain a system’s complexity, thereby freeing finite cognitive and energetic resources for further growth, innovation, and the tackling of higher-order challenges.2 The LoU is, therefore, the fundamental engine of progress.

This law is a neutral amplifier. When applied with narrow, unconscious goals—such as maximizing food surplus or material production—it leads to “Unthinking Exploitation”.2 The resulting entropic costs, such as deforestation, pollution, and climate change, are the predictable externalities of an unguided advance.2 This reframes environmental degradation not as a moral failing but as a physical consequence of applying a powerful law with incomplete information and limited objectives. The solution, therefore, is not to halt the engine of automation but to consciously aim it with a more complete set of goals, namely planetary health.2

Projecting the Divergence: The Accelerating Capability Gap

The most critical distinction between the HCN and ICN is not their current state but their trajectory over time. The capabilities of the HCN are biologically fixed and have not changed meaningfully for millennia. The capabilities of the ICN, governed by the exponential laws of technological progress, are improving at an accelerating rate.1

A graphical projection of global computational capacity would show the HCN as a flat, horizontal line, while the ICN’s capacity would appear as a steep exponential curve, rapidly diverging from the HCN’s static line.1 Similar graphs for global data transmission and storage capacity would show the same accelerating divergence.1

The inescapable conclusion from these projections is that the capability gap between the two systems is not only large but is widening exponentially. Any strategy that relies on the HCN to solve future environmental challenges is akin to trying to cross a chasm that is growing wider every second using a plank of a fixed length.1 The approach is not just suboptimal; it is mathematically doomed to fail. This realization makes the transition to an ICN-based approach not simply a strategic choice, but an urgent and unavoidable necessity.

Part IV: The Inverted Stack: Architecture of a Computer-Native Environmental Intelligence

The analysis of the HCN’s architectural insufficiency and the thermodynamic imperative for its automation compels a transition to a new operational model. This new paradigm, the “Inverted Stack,” is a computer-native architecture where machines handle the high-bandwidth tasks of computation and coordination, while humans are elevated to the high-value role of providing strategic and ethical direction.2 This section details the components of this proposed system.

The Infomechanosphere: A Planetary-Scale Computational Substrate

The new paradigm requires a new planetary-scale computational substrate, an “Infomechanosphere,” which is not a futuristic fantasy but an emergent property of existing, accelerating technological trends.2 We are already building its components, but largely in an uncoordinated, unconscious way. The “Invert the Stack” thesis is a call to become conscious architects of this process. Its primary components are rapidly maturing 2:

● Planetary Sensory Apparatus: This is the planet’s evolving nervous system, a global network of sensors providing real-time data. It includes vast arrays of Internet of Things (IoT) devices, remote sensing satellites, and the emerging field of quantum sensing, which leverages quantum mechanics to achieve unprecedented precision in detecting pollutants or subtle geophysical changes.2

● Internal Model of Reality (Digital Twin Earth): This is the system’s dynamic, virtual replica of the planet, embodied in Digital Twin Earth (DTE) platforms. Major initiatives, such as the European Commission’s Destination Earth (DestinE), are already building high-fidelity digital models of Earth to monitor, simulate, and predict environmental changes by integrating vast streams of observational data with high-performance computing.2

● Actuation Mechanisms: These are the system’s “hands,” the diverse “environmental logic gates” that translate information into physical action. These interventions can range from AI-guided drones for precision reforestation to nanoscale physical barriers that selectively filter toxins from waterways.2

Environmental General Intelligence (EGI): The Negentropic Regulator

The cognitive engine of the Infomechanosphere is Environmental General Intelligence (EGI).2

EGI is defined as a general intelligence grounded not in human affairs but in the dynamics of the natural world. It is an AI trained on vast environmental and spatial datasets with the explicit goal of understanding and optimizing ecological outcomes—to “think like an ecosystem,” not a person.2

This resolves a key tension in AI development. Instead of building an anthropocentric competitor to human cognition (AGI), EGI represents a truly complementary intelligence, one whose “mind” is structured for the planetary-scale, multi-variate, long-timescale systems thinking that the human brain is not evolutionarily optimized for.3 Within the proposed architecture, the EGI acts as the “negentropic regulator”.2 Its core function is to perform active inference on a planetary scale: continuously analyzing the DTE to forecast future states and identify “negentropic work”—interventions predicted to create environmental order and keep the Earth system within the safe operating space defined by the Planetary Boundaries.2

The distinction between these two forms of intelligence is crucial for the system’s safety and effectiveness, as clarified in Table 3.

Table 3: AGI vs. EGI: A Comparative Analysis of Intelligence Architectures

Each entry lists the aspect, then the value for Artificial General Intelligence (AGI), then the value for Environmental General Intelligence (EGI).

- Core Aim: AGI: achieve human-level general intelligence; perform virtually any intellectual task a human can.3 EGI: achieve general ecological intelligence; understand and model any aspect of Earth’s environment at a high level.3

- Primary Training Data: AGI: predominantly human-generated data (text, images, records of human activity).3 EGI: predominantly environmental and spatial data (climate records, satellite imagery, ecological datasets).3

- Evaluation Benchmark: AGI: human-centric performance (e.g., passing Turing tests, solving human-designed tasks).3 EGI: eco-centric outcomes (e.g., accuracy in predicting environmental changes, success in solving conservation problems).3

- Orientation: AGI: anthropocentric, optimized for human-defined goals and utilities.3 EGI: ecocentric, optimized for sustaining and enhancing life systems.3

The concept of a powerful AI raises immediate concerns about safety and alignment. The distinction between AGI and EGI is critically important because it addresses these concerns head-on by defining EGI as a complementary, not competitive, intelligence. By clearly delineating the differences in aims, training data, and orientation, the framework reframes the AI from a potential existential threat into a specialized, powerful tool for planetary stewardship.

Engineering Resilience: The Holographic Negentropic Framework (HNF)

The resilience and safety of the planetary intelligence system can be engineered into its fundamental architecture by applying principles from physics, specifically the Holographic Principle.4 This principle, which emerged from the study of black hole thermodynamics, posits that the complete description of a three-dimensional volume of space can be encoded on its two-dimensional boundary.4

The Holographic Negentropic Framework (HNF) applies this concept as an architectural blueprint.2 The Digital Twin Earth is framed as the “holographic boundary” that encodes the full state of the planetary “bulk”.2 This is not merely a metaphor for data storage; it implies a crucial design principle for resilience. Modern research has shown that this holographic encoding is structurally analogous to quantum error-correcting codes (QEC), where logical information is stored non-locally and redundantly across many physical qubits, making the information robust against local errors or corruption.4

This connection provides a physical, not just ethical, solution to the AI safety problem. The conventional approach to AI alignment focuses on the intractable challenge of encoding ambiguous and evolving human values into software. The HNF, however, shifts the focus to engineering the system’s underlying information structure for inherent physical robustness. A holographically encoded system is resilient to single points of failure by design, because the information is distributed and redundant. This approach does not rely on telling the AI “don’t be evil”; it relies on building the AI such that a single point of failure—be it technical or logical—cannot cascade through the entire system. Safety becomes an emergent property of its fundamental physical architecture, providing a more robust solution than purely ethical or software-based constraints.4

Part V: The Physics of Viability and the Redefinition of Human Purpose

The proposal for a planetary-scale computational system necessitates a rigorous assessment of its physical feasibility and a clear-eyed examination of its implications for humanity. This final section analyzes the system’s viability through the lens of thermodynamics and explores how this technological transition fundamentally redefines and elevates the role of human consciousness.

The Thermodynamic Ledger: Balancing Entropic Costs and Negentropic Gains

The ultimate viability of the entire architecture hinges on a strict thermodynamic accounting. The system cannot violate the Second Law of Thermodynamics; the total entropy of the complete system (Intelligence + Environment + Surroundings) must not decrease (ΔS_Total = ΔS_Intelligence + ΔS_Environment ≥ 0).2 The system can be considered a net positive for planetary health if the value of the created environmental order (the negentropy, represented by −ΔS_Environment) is judged to be greater than the cost of the generated systemic disorder (ΔS_Intelligence).2 This fundamental trade-off is summarized below.

The Thermodynamic Ledger of Planetary Thriving pairs entropic costs (debits, ΔS_Intelligence > 0) with negentropic gains (credits, −ΔS_Environment > 0):

- Debit, sensing (measurement cost): continuous entropy generation from operation of the global sensor network to acquire information. Paired credit, pollution sequestration: reduction of physical disorder by concentrating and neutralizing dispersed pollutants.

- Debit, computation (Landauer cost): massive energy dissipation as waste heat from data centers running the EGI and DTE. Paired credit, biodiversity restoration: creation of complex, information-rich biological structures in ecosystems like forests and reefs.

- Debit, actuation (work cost): inefficient conversion of energy to work when operating environmental intervention technologies. Paired credit, climate stabilization: maintaining Earth’s energy balance within a stable, low-entropy state conducive to life.

- Debit, energy source (conversion cost): inevitable entropy production from the power plants that supply the entire system with low-entropy energy. Paired credit, systemic resilience: increasing the information content and feedback loops within Earth systems, making them more stable and predictable.

Proposing a planetary-scale computational system immediately raises questions about its own environmental footprint. This ledger directly confronts that issue by framing the system’s viability in the rigorous, non-negotiable language of thermodynamics. A quantitative example demonstrates the physical plausibility of this trade-off. Assuming a global EGI consumes 1000 TWh of energy annually, its computational operation would generate an entropy cost of approximately +1.2 × 10¹⁶ J/K per year.5 Concurrently, sequestering 10 gigatonnes of atmospheric CO₂ per year—a high-value negentropic task—would create an environmental credit of approximately −2.75 × 10¹⁶ J/K per year.5 By showing that the potential negentropic gains are of the same order of magnitude as the entropic costs, this analysis establishes the physical plausibility of the entire concept, transforming it from science fiction into a tractable, long-term engineering challenge.
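The debit figure quoted above can be reproduced with a short back-of-envelope calculation. The following Python sketch assumes that all of the consumed energy is ultimately rejected as waste heat at roughly 300 K. The essay does not state this temperature, so it is an assumption chosen here because it recovers the quoted value. The credit figure is taken directly from the text rather than derived, and the function name is illustrative only.

```python
# Back-of-envelope check of the ledger's computational debit.
# ASSUMPTION (not stated in the essay): every joule consumed ends up as
# waste heat rejected to surroundings at about 300 K, so dS = Q / T.

JOULES_PER_TWH = 3.6e15  # 1 TWh = 1e12 W * 3600 s


def entropy_from_heat(energy_twh: float, temperature_k: float = 300.0) -> float:
    """Entropy generated (J/K) when energy_twh is dissipated as heat at temperature_k."""
    return energy_twh * JOULES_PER_TWH / temperature_k


# Essay's scenario: a global EGI consuming 1000 TWh per year.
debit = entropy_from_heat(1000.0)

# Essay's quoted magnitude for sequestering 10 Gt of CO2 per year (J/K per year).
# This value is copied from the text, not computed here.
credit_magnitude = 2.75e16

ratio = debit / credit_magnitude
print(f"debit = {debit:.2e} J/K per year")
print(f"debit / credit = {ratio:.2f}")
```

Under these assumptions the debit comes out near 1.2 × 10¹⁶ J/K per year, matching the figure in the text, and the debit-to-credit ratio is below one. A different rejection temperature would scale the debit inversely, so the comparison is best read as an order-of-magnitude check.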

The Breakeven Point: The Path to Net-Positive Planetary Impact

The viability of the system is a function of its thermodynamic efficiency, which will improve over time, implying the existence of a “thermodynamic breakeven point”.2 The initial construction and training of the Infomechanosphere will have a massive, front-loaded entropic cost. However, the operational efficiency of information processing and energy conversion has historically improved at an exponential rate, a trend captured by observations like Koomey’s Law, which describes the doubling of computational energy efficiency roughly every 2.6 years.5

This suggests that the system’s operational entropy cost per unit of negentropic work created (ΔS_Intelligence / |−ΔS_Environment|) will decrease over its lifetime.2 This leads to a breakeven point, after which the cumulative negentropic benefit to the planet begins to outweigh the cumulative entropic cost of the system’s existence and operation.2 The system is, in essence, an “information engine” that can apply its own intelligence to optimize its own efficiency—improving sensor design, creating more efficient algorithms, and optimizing energy grids. It is a system designed to get better at the very task of creating order, ensuring its long-term thermodynamic viability.
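The breakeven argument can be illustrated with a toy cumulative ledger. The Python sketch below is one simple way to model it, not the essay's own formulation. It assumes a one-time upfront entropic cost, an annual operating cost that halves every 2.6 years (a Koomey-style trend applied to a fixed workload), and a constant annual negentropic credit. The function name, the year-by-year discretization, and the upfront cost used in the example call are all hypothetical.

```python
from typing import Optional


def breakeven_year(upfront_cost: float,
                   annual_cost_0: float,
                   annual_credit: float,
                   halving_years: float = 2.6,
                   horizon: int = 200) -> Optional[int]:
    """Return the first whole year in which cumulative credit >= cumulative cost.

    All quantities are entropy magnitudes in J/K. Returns None if breakeven
    is not reached within `horizon` years.
    """
    cumulative_cost = upfront_cost
    cumulative_credit = 0.0
    for year in range(1, horizon + 1):
        # Operating cost for this year, using the efficiency at the start of the year.
        cost = annual_cost_0 * 0.5 ** ((year - 1) / halving_years)
        cumulative_cost += cost
        cumulative_credit += annual_credit
        if cumulative_credit >= cumulative_cost:
            return year
    return None


# Annual debit and credit magnitudes are the essay's figures;
# the upfront cost of 5e16 J/K is a HYPOTHETICAL placeholder.
print(breakeven_year(upfront_cost=5e16, annual_cost_0=1.2e16, annual_credit=2.75e16))
```

In this toy model the breakeven year is driven mostly by the size of the front-loaded cost relative to the annual credit, since the operating cost shrinks quickly. A fuller treatment would also let the credit side vary over time.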

The Elevation of Humanity: From Operators to Architects of a Thriving World

The “Inverted Stack” does not lead to human obsolescence; it leads to human essentialization, clarifying and elevating the unique functions of consciousness.2 By automating the operational “how” of planetary management, the system conserves Whitehead’s precious “cavalry charges” of conscious thought for their highest and most essential purpose: defining the “why”.2

The future of human work shifts decisively from analysis and execution to the formulation of values, goals, and moral constraints.2 The new core functions for humanity are Aiming the system by setting its overarching purpose, Overseeing its strategic direction, and Embedding Ethics by engaging in the moral deliberation required to set its constraints.2 Our future role is not to compete with our increasingly capable “unthinking” systems, but to provide the conscious, thinking vision that gives them direction. We evolve from being planetary engineers to being planetary philosophers and moral architects.3

This transition should not be viewed as an incremental change but as a fundamental phase transition for the planet, analogous to the emergence of multicellular life or the Cambrian explosion.2 It represents the point at which the biosphere develops a coherent, high-bandwidth nervous system (the global sensor network) and a coordinating brain (the EGI), enabling an entirely new level of planetary self-regulation.2 This reframes the entire project not just as an environmental solution, but as a major evolutionary step for life on Earth, with humanity acting as the conscious catalyst.

Conclusion

This first-principles analysis leads to a series of interconnected and profound conclusions.

The current paradigm of planetary management, the Human-Cognitive Network, is architecturally and mathematically insufficient for the complexity of the Anthropocene. Its biologically static, low-bandwidth, and high-latency nature renders it incapable of managing the data-intensive, real-time challenges of the 21st century. The environmental crises we face are the physical manifestation of a system operating beyond its design limits.

The transition to an Integrated Computational Network, or “Inverting the Stack,” is not a matter of choice but a thermodynamic and informational necessity. It is driven by the relentless pressure of the Law of Unthinking to automate complex, energy-intensive cognitive work and is enabled by an exponentially widening capabilities gap between human and machine intelligence. The ICN, with its programmable exascale processing, lossless petabyte-scale storage, and near-instantaneous global communication, is the inevitable successor substrate for environmental intelligence.

The proposed architecture, an Infomechanosphere regulated by an ecocentric Environmental General Intelligence and engineered for resilience via the Holographic Negentropic Framework, is not a violation of physical law but a sophisticated information engine designed to operate within thermodynamic constraints to maximize the creation of environmental order. Its viability is a tractable engineering challenge, contingent on reaching a “thermodynamic breakeven point” where the negentropic gains for the planet outweigh the entropic costs of the system’s operation.

This transition fundamentally redefines and elevates humanity’s purpose. By automating the operational “how,” it liberates human consciousness to focus on the normative “why.” Our future role is not to be anxious wardens of a fragile museum but to become joyful, co-creative gardeners of a living, evolving planet. This represents a planetary phase transition, enabling a new level of self-regulation and flourishing. It is a call to consciously align our vast technological and creative potential with the fundamental negentropic processes of the universe, becoming what we were always meant to be: the conscious co-architects of a thriving, living world.


Works cited

1. Human vs. Computer Environmental Intelligence

2. Inverting the Stack_ Environmental Intelligence (1).pdf

3. Environmental Paradigm Shift Research Report

4. From Fear to Flourishing: An Architecture for Planetary Thriving in the Information Age

5. Compute Together, Stay Together: A First-Principles Analysis of Universal Computation and the Negentropic Imperative for Alignment

6. TOP500 List - June 2025, accessed October 25, 2025, https://top500.org/lists/top500/list/2025/06/

7. TOP500 - Wikipedia, accessed October 25, 2025, https://en.wikipedia.org/wiki/TOP500

8. 4 Types of Big Data Technologies (+ Management Tools) - Coursera, accessed October 25, 2025, https://www.coursera.org/in/articles/big-data-technologies

9. rivery.io, accessed October 25, 2025, https://rivery.io/blog/big-data-statistics-how-much-data-is-there-in-the-world/#:~:text=In%202024%2C%20the%20global%20volume,by%20the%20end%20of%202025.

10. Japan sets new world record for internet speed: 4 million times faster than the US average, accessed October 25, 2025, https://www.earth.com/news/japan-sets-new-world-record-for-internet-fiber-sp eed million-times-faster-than-us/

11. “Speed Never Seen Before”: Scientists Smash Optical Fiber Record With a Breakthrough That Redefines Global Internet Potential - Rude Baguette, accessed October 25, 2025, https://www.rudebaguette.com/en/2025/06/speed-never-seen-before-scientistssmash-optical-fiber-record-with-a-breakthrough-that-redefines-global-internet-potential/

12. Aston University researchers break ‘world record’ again for data transmission speed, accessed October 25, 2025, https://www.aston.ac.uk/latest-news/aston-university-researchers-break-world-record-again-data-transmission-speed

13. World Record 402 Tb/s Transmission in a Standard Commercially Available Optical Fiber | 2024 | NICT - National Institute of Information and Communications Technology, accessed October 25, 2025, https://www.nict.go.jp/en/press/2024/06/26-1.html

14. Cosmic Computation and Alignment

15. From Protection to Flourishing: A First-Principles Analysis of a Negentropic Paradigm for Planetary Stewardship

Licensed CC-BY-4.0.


Cite this

@misc{anderson_2025_scaling_imperative_hcn_vs_icn,

author = {Jed Anderson and Grok 4 Deep Research and ChatGPT5 Deep Thinking and Google Gemini 2.5 Deep Research},

title = {The Scaling Imperative: A First-Principles Comparison of Human-Cognitive and Integrated Computational Networks for Planetary-Scale Intelligence},

year = {2025},

url = {https://jedanderson.org/essays/scaling-imperative-hcn-vs-icn},

note = {Accessed: 2026-05-13}

}

Anderson, J., Grok 4 Deep Research, ChatGPT5 Deep Thinking, & Google Gemini 2.5 Deep Research. (2025). The Scaling Imperative: A First-Principles Comparison of Human-Cognitive and Integrated Computational Networks for Planetary-Scale Intelligence. Retrieved from https://jedanderson.org/essays/scaling-imperative-hcn-vs-icn

Anderson, Jed, Grok 4 Deep Research, ChatGPT5 Deep Thinking, and Google Gemini 2.5 Deep Research. "The Scaling Imperative: A First-Principles Comparison of Human-Cognitive and Integrated Computational Networks for Planetary-Scale Intelligence." Jed Anderson, October 25, 2025, https://jedanderson.org/essays/scaling-imperative-hcn-vs-icn.